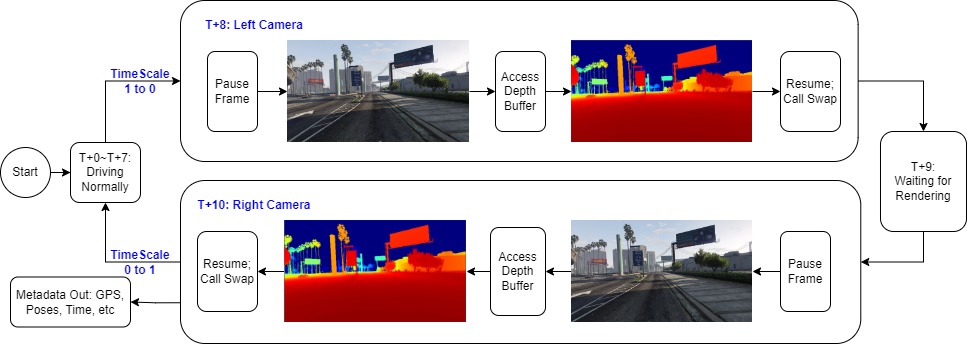

VR Remote Driving System (US 10,880,355 B2)

US & CN Patents · VR streaming · WebRTC/RTMP/RTSP · 360° Camera · Robotics

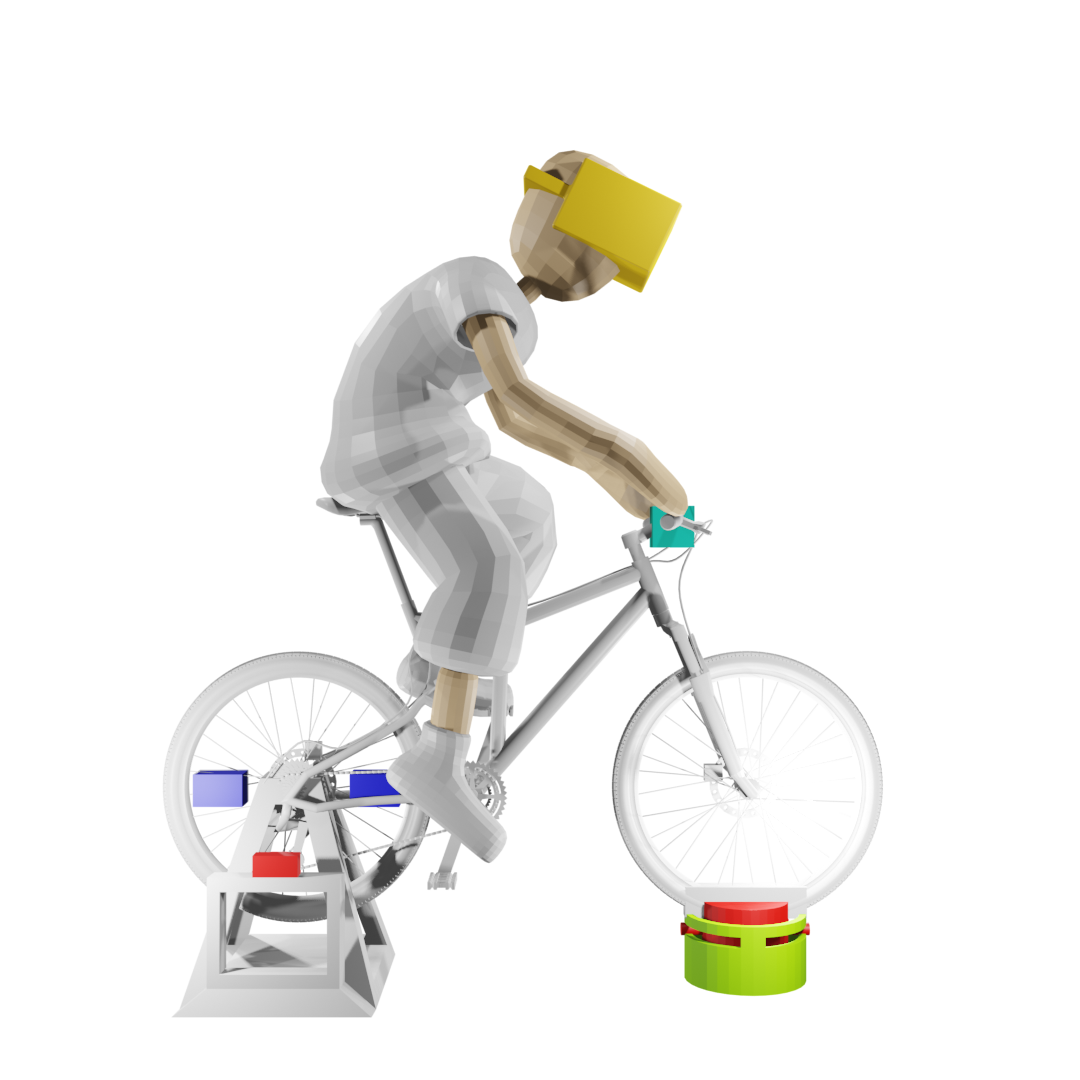

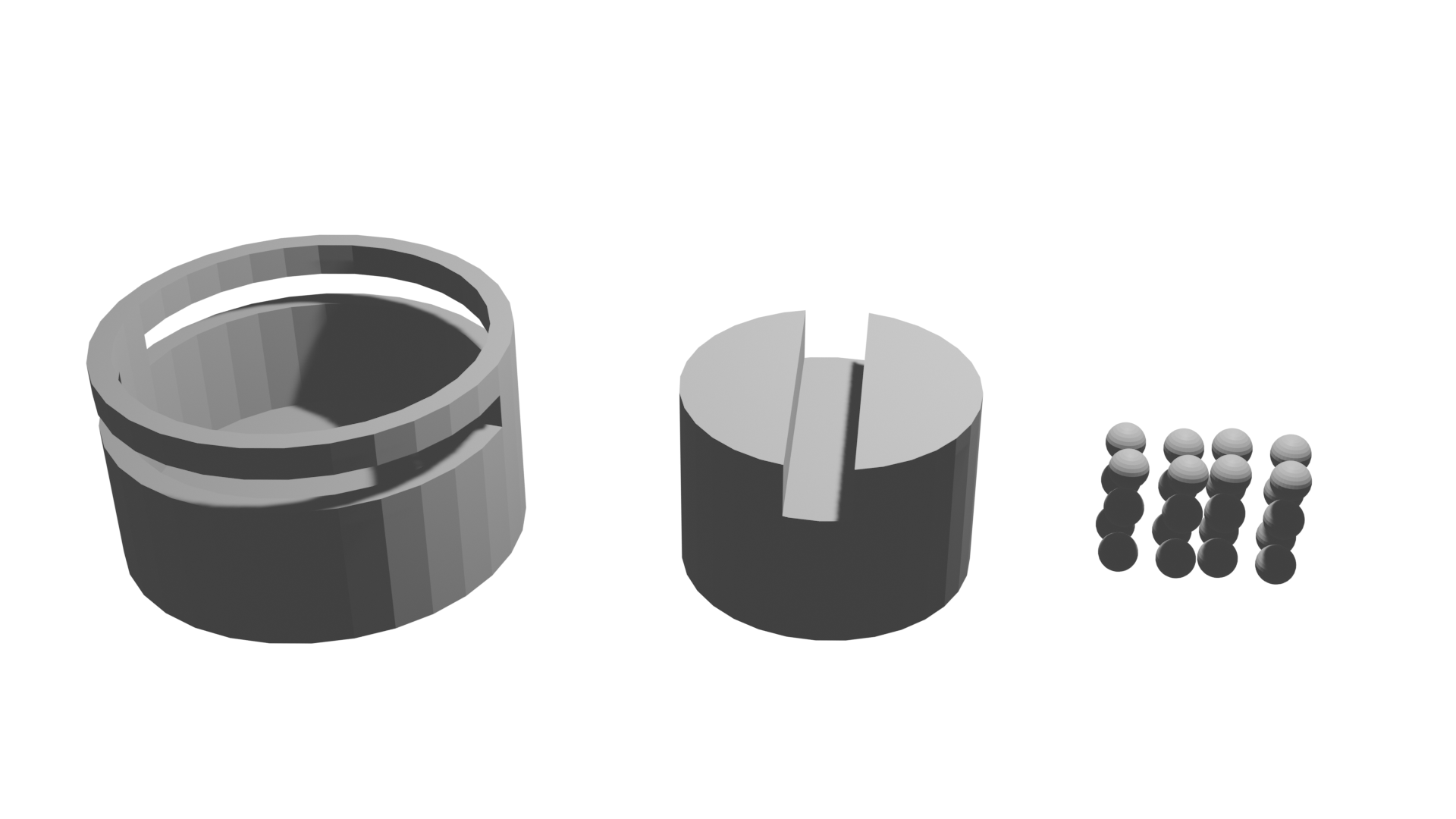

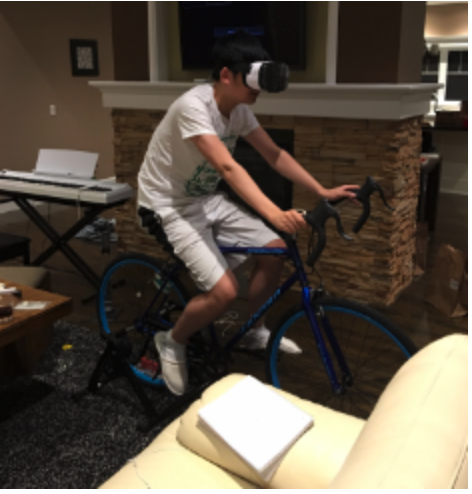

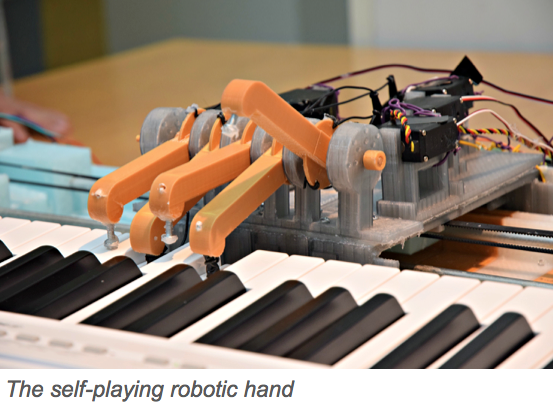

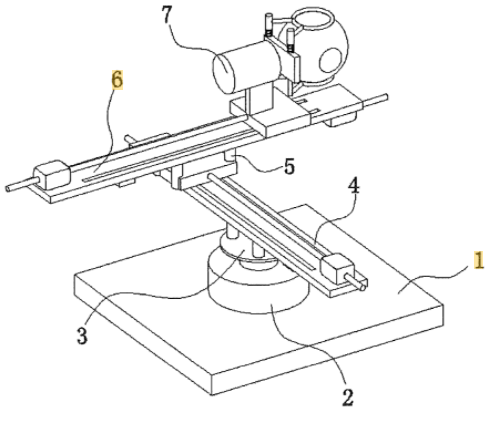

A VR teleoperation system that lets an operator drive a real vehicle from inside a reconstructed virtual environment, using a 360° camera, a physical wheel/pedal rig, and a Wi-Fi/cellular link. It integrates WebRTC-over-RTMP to minimise streaming latency and a robotic-arm rig to synchronise the operator's body movement with the vehicle.